Today we took our beautiful bull mastiff to be put down. Heart-breaking, but necessary... she was over 10 years old and had been diagnosed with kidney failure. She was skipping meals and her weight had dropped to just 34 kilograms (from 51 kg a few years before).

She had a wonderful sniff around the vet's waiting room, and it was lovely to see some of her old joy and bounciness back. But it also brought back memories of her younger self. It made it all the sadder to say good-bye and stroke her head as she fell asleep for the last time...

RIP, Amber!

I'm a South African software developer based in the technology hub of Kitchener-Waterloo, Ontario. I enjoy solving mathematical and algorithmic puzzles. These are some of my thoughts...

Thursday, February 27, 2014

Sunday, February 23, 2014

Birthday Board Games Bash

Thank you!

Firstly, a big thank you to my wonderful wife for arranging a board games party on Saturday for my birthday.Around 40 friends attended, with the numbers being fairly evenly split between adults and children. We started at 10:00 am, and things went very smoothly, despite a power outage to replace some stolen electrical cable in the area.

There were 3 separate groups of friends present. I was a little apprehensive as it's always difficult to mix different social groups together. But it went really well.

Ricochet Robots

We started out playing Ricochet Robots as it can handle an arbitrary number of people and it's easy to join or leave at any time. It's a very clever competitive puzzle and it can be a real brain-burner. It's not everyone's cup of tea, but I love it!There are online versions at http://www.ricochetrobot.com and http://www.ricochetrobots.com.

The Resistance: Avalon

Two of my friends had brought along copies of Avalon (the Resistance) so we then split into two groups - one for novices and the other for the experts. I played in the expert group, but felt a bit out of my depth at first.There are a group of us who play games once a week after work. The rest of the guys have been playing Avalon a lot over the past few months and even have their own terminology now. But over that time I was temporarily seconded to another project to produce an architecture document for a client. So I have a lot of catching up to do.

A present!

Although everyone had been instructed not to bring presents, my colleagues clubbed together to buy me a present anyway. They had colluded with my wife, who had sneakily found out which games were on my most desired list. So I received a copy of Libertalia.This game has been a big hit in our weekly after-work games sessions. It's a lot of fun to play. So I was delighted!

Ultimate Werewolf

After one of the Avalon owners left the party, we ended up with around 15 people still wanting to play. Although the Resistance improves on Werewolf in just about every way, it only caters for up to 10 players. Werewolf can handle much more than that.So we switched over to a few games of Ultimate Werewolf instead. Great fun as always!

The birthday buffoon!

As numbers dwindled, we switched back to Avalon again. We ended with an absolutely excellent game, where the minions of Mordred won all 3 quests and also knew who Merlin was.I ended up being a perfect patsy for two of the minions of Mordred, who inveigled their way into the trusted group. It was so impressive to see how cleverly they pulled the wool over our eyes, that I felt honoured to have been part of the game - even if I was one of the ones who had been so comprehensively fooled by them.

Usually our monthly board games events last until late into a Saturday night, but this time everyone was gone before supper.

And thanks again!

All in all, it was a really fun way to spend a birthday. Thanks to everyone who attended, and made this an ideal birthday party for me!Saturday, February 08, 2014

Researching the Josephus problem

All posts in this series:

- One Potato: A description of the problem

- Two potato: The basic equations

- Three potato: An algebraic formula for the last person left

- Four: A neat calculation method using binary numbers

- Five potato: F# functions to check the various formulae

- Six potato: Using a calculation graph to see the pattern

- Seven potato: Generalizing to other intervals

- More: An efficient algorithm for arbitrary intervals

- Online research: In which I discover that it is called the Josephus problem

Introduction

This is the ninth post in a series about an interesting Mathematical problem known as the Josephus problem.Imagine a number of people sitting in a circle. Every second person is asked to leave the circle. This continues until only one person is left. If you label the people 1 to n, what is the label of this last person?

In the first 6 posts in the series:

- I derived a formula for the label of the last person left,

- I provided various proofs of the formula, and

- I wrote code in the F# programming language to calculate the answer in various ways.

In the seventh and eighth posts I created an efficient algorithm for calculating the answer when the number of people skipped is not two but some arbitrary interval k. But I wasn't able to find a closed formula. And I felt I had gone as far as I could on my own. It was time to see how other people had solved the problem.

This post is about what I discovered.

The problem has a name

The first thing I discovered is that the problem has a name. It is known as the Josephus problem and it is widely used in Mathematical and Programming challenges. So I will be updating the previous posts to reflect the accepted name.You can read more about the history of the Josephus problem on Wikipedia or Wolfram Mathworld.

In search of an elegant solution

My own attempt

When there are n people in the circle labelled 1 to n, the formula for the last person left in the circle is: $$ f(n) = 2n + 1 - 2^{{\lfloor log_2 n \rfloor} + 1} \tag{1} $$In the third post in the series I used algebra to prove this result. But although algebra is a very powerful tool for proving the formula, it doesn't give any insight into why the formula is true.

One of the challenges I set myself was to provide that insight. The closest I came was in the sixth post in the series, where I used a calculation graph to show the pattern of values for $f(n)$.

Chamberlain's solution is much more elegant...

Chamberlain's solution

Chamberlain's Solution can be found on page 403 of Problem Solving and Recreational Mathematics 2012 by Paul Yiu of Florida Atlantic University.His solution is based on a few key insights. I've paraphrased these as follows:

- When the number of people in the circle is a power of two, the last person left is the first person skipped. This is because:

- All the even numbered people are skipped each time around the circle

- Exactly half the people are eliminated, so the remaining size of the circle is again a power of two

- Person one will still be the first person skipped on the next traversal of the circle

- So the process repeats itself with the circle halving each time, until person one is the only person left

- Suppose the size of the circle is $2^m + r$ (with $0 \le r < 2^m$). Carry out the first $r$ removals. Then:

- The first $r$ people removed are persons $2, 4, 6, ..., 2r$

- The next person to be skipped will be person $2r + 1$

- This leaves a new, smaller circle with $2^m$ people

- So we can apply the rule from point 1 above (for a circle of size $2^m$)

- So person $2r + 1$ will be the last person left in the circle

Making it rigorous

There are a few points above which may not be obvious. Let's try to make them more rigorous:In point 2.a., the assumption is made that the labels of the first $r$ people removed would not "clock over" (i.e. one revolution of the circle hasn't been completed). Is this assumption reasonable?

$$ \begin{align*} \text {By definition: } 0 & \le r < 2^m \tag{2} \\ \text {and: } n & = 2^m + r \tag{3} \\ \\ \therefore 2r & < 2^m + r &\text{ by adding r to both sides of the inequality on the right of (2)}\\ \text{i.e. } 2r & < n \tag{4} \\ \\ \text{Also: } 2r + 1 & \le n \tag{5} \end{align*} $$

Equation 4 shows that this is a reasonable assumption.

In point 2.b. we also assumed that the next person to be skipped will be person $2r + 1$. Equation 5 proves that this label doesn't "clock over" either.

So this proves that $f(2^m + r) = 2r + 1 \text{ where } 0 \le r < 2^m$.

And this can be re-expressed in terms of logarithm and floor functions by noting that:

$$ \begin{align*} 0 & \le r < 2^m & \text{ from equation 2} \\ \therefore 2^m & \le 2^m + r < 2^m + 2^m \\ \therefore 2^m & \le n < 2^{m+1} & \text{ from equation 3} \\ \therefore m & \le \log_2{n} < m+1 & \text{ since logarithms are monotonic (order-preserving) } \\ \therefore m & = {\lfloor log_2 n \rfloor} \tag{6} \\ \\ \text{So:} \\ f(n) & = 2r + 1 \\ & = 2( n - 2^m ) + 1 & \text{ from equation 3}\\ & = 2n + 1 - 2^{m+1} \\ & = 2n + 1 - 2^{{\lfloor log_2 n \rfloor} + 1} & \text{ from equation 6} \end{align*} $$

And this is the formula given in equation 1 earlier.

A beautiful visualization of Chamberlain's solution

You can find a beautiful visual explanation of this solution on the Exploring Binary web site.Another very succinct solution

I was also very impressed by this solution. But although it was very succinct, I didn't feel that it provided the same "aha" moment of Chamberlain's solution.Generalizing the Josephus problem to arbitrary intervals

I found two algorithms for generalizing the Josephus problem to arbitrary intervals.Jakobczyk's algorithm

One of these was in a paper entitled On the Generalized Josephus Problem by F Jakobczyk. Although interesting in its own right, I'm rather going to concentrate on the other algorithm I found, as it was far more elegant...The Ahrens-Schubert algorithm

I found the first page of an article by I. M. Davids published by the Applied Probability Trust. This page gives a very elegant algorithm for the Josephus problem generalized to arbitrary intervals. But since only the first page (with the abstract) is provided, I don't have a proof for the algorithm.The Ahrens-Schubert solution uses a number series known as an Ahrens array. These are also referred to in the paper as "rounded up" arrays. They are similar to geometric sequences, except that the answer is rounded up after every step. So each term is the previous term multiplied by a factor $f$ and then rounded up to the nearest integer.

Suppose $a_0$ is the initial integer term. Then: $$ \begin{align*} a_1 & = \lceil{a_0 . f}\rceil & \\ a_2 & = \lceil{a_1 . f}\rceil & = \lceil{\lceil{a_0 . f} \rceil . f} \rceil \\ & ... \\ a_m & = \lceil{a_{m-1} . f}\rceil &= \overbrace{\lceil{ \text{ ... } \lceil{\lceil{a_0 . f} \rceil . f} \rceil} \text{ ... } .f \rceil}^\text{m times} \\ \end{align*} $$

The Ahrens-Schubert solution works by finding the largest number (say $a_{m}$) in this series which is less than $kn+1$, where $k$ is the interval to skip and $n$ is the number of people in the circle. The answer is then $ kn + 1 - a_m$.

But what are the values for $a_0$ and $f$ in the rounded up series?

Well, one neat thing about the Ahrens-Schubert solution, is that by choosing different values for $a_0$ it can determine not just the last person left in the circle, but any arbitrary $e^\text{th}$ person removed from the circle. Here are the parameters: $$ \begin{align*} f & = \frac{k}{k-1} \\ a_0 & = k(n - e) + 1 \\ \therefore a_0 & = 1 & \text{when determining the last person left in the circle (set } e = n \text{)}\\ \end{align*} $$

The Ahrens-Schubert solution for $k = 2$

Something else I like about the Ahrens-Schubert solution is how cleanly the closed formula for $k = 2$ emerges from the algorithm. When $k = 2$: $$ \begin{align*} f & = \frac{2}{2 - 1} = 2 \\ a_0 & = k(n - e) + 1 = 2(n-e) + 1 \\ \text{ or: } a_0 & = 1 & \text{for the last person left in the circle}\\ \therefore a_m & = \overbrace{\lceil{ \text{ ... } \lceil{\lceil{a_0 . f} \rceil . f} \rceil} \text{ ... } .f \rceil}^\text{m times} \\ & = \overbrace{\lceil{ \text{ ... } \lceil{\lceil{1 . 2} \rceil . 2} \rceil} \text{ ... } .2 \rceil}^\text{m times} \\ & = 2^m &\text{since the ceiling falls away as all terms are integers} \end{align*} $$ So the answer is $2n + 1 - 2^m$ where $2^m$ is the largest value of $a_i = 2^i$ less than $2n + 1$. And this reduces to equation 1!

My implementation of the Ahrens-Schubert algorithm

Below is my implementation of the algorithm in F#:

let rec getLargestTermInRoundedUpGeometricSeriesBelow

(startValue:int) (factor:double) boundary =

if startValue >= boundary then

raise (

System.ArgumentException(

"The starting element in the series is not below the bound!"))

else

let nextValue = int (System.Math.Ceiling( (double startValue) * factor ))

if nextValue >= boundary then

startValue

else

getLargestTermInRoundedUpGeometricSeriesBelow nextValue factor boundary

let calcEliminationNumberInCircleWithIntervalByRoundedUpSeries

eliminationIndex interval sizeOfCircle =

let dInterval = double interval

let factor = dInterval / (dInterval - 1.0)

let bound = interval * sizeOfCircle + 1

let initialValue = interval * (sizeOfCircle - eliminationIndex) + 1

bound - getLargestTermInRoundedUpGeometricSeriesBelow initialValue factor bound

let calcLastLeftInCircleWithIntervalByRoundedUpSeries interval sizeOfCircle =

calcEliminationNumberInCircleWithIntervalByRoundedUpSeries sizeOfCircle interval sizeOfCircle

Performance of the Ahrens-Schubert algorithm

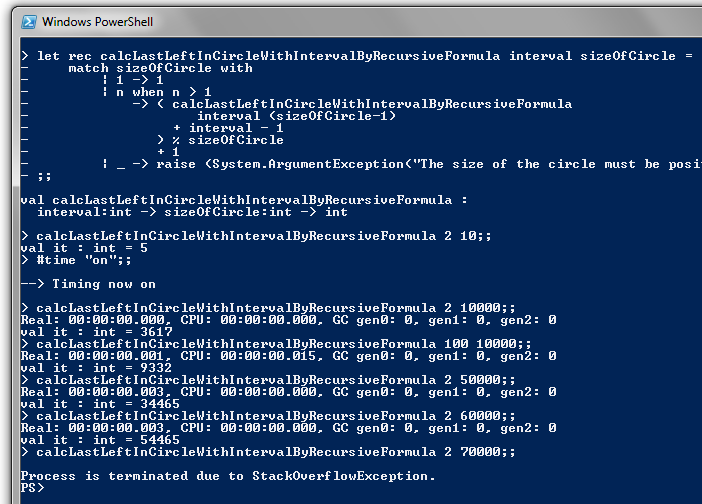

Below are timings for the algorithm:

These are the same inputs as I used in my own algorithm from post 8 of the series, shown below:

Comparing the timings, you can see that the Ahrens-Schubert algorithm is noticeably faster. It took 0.9 seconds to my algorithm's 1.2 seconds when k = 2, and 2.4 seconds versus 2.6 seconds when k = 100.

Other references

Code to solve the Josephus problem

Implementations in a variety of languages can be found on the Rosetta Code web site.More research on the Josephus problem

I found an extensive list of references to the Josephus problem on the puzzlemuseum.com web site. Professor David Singmaster has provided a list of articles on recreational Mathematics. Page 4 of source document 3 lists a large number of articles on the Josephus problem.Conclusion

In this series of articles, I have tackled and successfully solved the Josephus problem. This includes finding an efficient algorithm when the problem is generalized so that an arbitrary number of people is skipped.I have discovered that this is a well-known problem with an extensive history and a number of explanations and algorithms, including two that were extremely elegant. This makes it an ideal problem to tackle yourself, as there are extensive online resources to compare your approach against.

Using F# to implement various algorithms has been particularly rewarding. This has piqued my interested in functional programming. As a result, I have started dabbling in Haskell. I also recently completed Martin Odersky's Functional Programming Principles in Scala course on Coursera and the follow-up course on the Principles of Reactive Programming. These are thoroughly enjoyable courses, which I recommend highly.

But I'm glad to be finally wrapping up this series on the Josephus problem. It's definitely time to move onto something new!

Sunday, September 22, 2013

The 2013 Entelect AI Challenge Play-offs

Introduction

Last Saturday evening (the 14th of September), I attended the play-offs of the 2013 Entelect Artificial Intelligence Programming Challenge.Last year the theme was based on the Tron light-cycles game, but on a sphere instead of a grid. This year the theme was inspired by the 1980's tank warfare game, Battle City.

Here's a whirlwind summary of the game rules...

There are 8 fixed boards ranging in size from about 61x61 to 81x81. Each player has 2 tanks. A play wins by either shooting or driving over the enemy base. Bullets move at twice the speed of tanks, and tanks can shoot each other and shoot through walls. Tanks occupy a 5x5 area. Bases and bullets occupy a single cell. When a wall is shot, the two walls on either side are also destroyed (thus allowing a tank to shoot a path through the walls). If tanks try to move into a wall or another tank, the tank will turn but not move. A tank can only have one bullet in play at a time. So the tank is effectively disarmed until its bullet hits a wall, another tank, a base or the edge of the board. Both players move simultaneously and there are 3 seconds between turns. The players communicate with the game engine via SOAP web service calls.

Last year there were over 100 competitors, but this year the challenge was definitely a few notches up in complexity. In the end there were only 22 contestants. So I was expecting the play-offs to be much quieter than last year. But fortunately it wasn't like that at all.

As with last year's competition the camaraderie was superb. There was a lot of lively chatter. And it was great to put faces to some of the people I had been interacting with on the unofficial google groups forum for the competition.

My path to the play-offs

My first bot

Although I worked hard on my bot, I had also been working crazy hours at work and I was still quite tired. But I was given a couple of days off work to compensate for the long hours I had been working. So I don't think the hours at work affected my productivity too much (I ended up writing over 15 000 lines of code in the 7 weeks of the competition). But I think I made more careless mistakes than I usually would.Between my own bugs and quite a few bugs and race conditions in the official test harness, I lost most of the final week of the competition to bug fixing. I found myself with a number of really nice algorithms, but with no bot to make use of them!

The result is that I very nearly didn't submit a bot at all. My initial entry used a very basic heuristic of having one tank take the shortest path to attack the enemy base and the other tank attack the closest enemy tank. It had very basic bullet avoidance code which I added at the death (so to speak), and which hadn't been adequately tested.

At that stage I had given up on doing well. My main reason for entering was to attend the play-offs, meet up with some of the other contestants and enjoy the superb spirit of the event. I had very low expectations for my bot and I named it "Vrot Bot".

Vrot Bot

"Vrot" is the Afrikaans word for rotten. Like many Afrikaans words it has become a standard part of English slang in South Africa.It is pronounced like the English word "fraught", but with a short vowel sound and a rolled "r". But if you're an English-speaking South African, you will mis-pronounce it slightly so that "vrot" rhymes with "bot". So it will sound like "frot bot".

The entry date gets extended

Due to the small number of competitors and some technical issues with the test harness, the competition ended up being extended twice.This gave me an extra week to put a much more sophisticated bot together. However it was only in the last 24 hours of the competition that my new bot ended up beating the original vrot bot convincingly. And a lot of the new code had not gone through nearly enough testing, so it was bound to be buggy.

On that basis I decided to keep the name Vrot Bot.

Testing, testing, testing...

Last year I came fourth in the competition. My secret sauce was building a WPF user interface that allowed me to play against my bot, save and load games, rewind games to arbitrary points in their history, visualize a variety of parameters at each cell of the board and drill down into the search tree of moves (I used the NegaMax algorithm to choose moves). I ended up with a very robust, well-tested bot.Although I had planned to do something similar this year, the competition had a much shorter duration than last year and I never quite got there. At one point I had a choice between writing my own test harness and using the official test harness provided by the organisers.

I chose to use the official test harness, because I was concerned about the risk of integrating a C# WCF web service client with the SOAP web service exposed by the Java test harness. So I wanted to test that integration as thoroughly as possible, and my own test harness wouldn't have provided that capability.

I ended up using a variety of simpler methods for testing my bot's logic. However this made the test cycle much slower, and I paid the price in having a much buggier bot. There were many parts of my code which were very poorly tested because I simply didn't have a quick and easy way of forcing the game into the scenario I wanted to test.

If there was only one thing I could change about my approach to this year's competition, it would be to write my own test harness (as well as using the official test harness for testing integrations). That would have given me the ability to load a previously played game at any point in its history, instead of having to wait minutes for the harness to reach the turn where I knew the bug to be.

Performance tuning...

Last year my bot's main weakness was its slow performance. The first and third-placed players both used C++ for their bots, so having good performance gave a distinct competitive advantage. So this year I put a lot more effort into tweaking the performance of my bot.There's a very true saying that premature optimization is the root of all evil. I was well aware of this. However I also felt that to get really good performance the data structures would need to be designed with performance in mind. And that's something that's not easy to change later if you get it wrong.

With hindsight I shouldn't have spent as much time worrying about performance. The search space for this year's challenge was massive. That meant that a brute force approach was far less valuable than last year. My time would have been better spent improving my testing capability.

The play-offs

The 22 contestants were grouped into 4 round robin pools with 5 to 6 players per pool.Many of the matches were decided on silly mistakes, so I think many of the other contestants had also been struggling with the same issues: the shorter competition timeframe, a much more complex problem space and difficulties with adequately testing their bots.

My bot performed much better than I was expecting. I topped my pool quite easily, winning 4 of my 5 pool matches.

Strangely enough, my bot seemed to be a lot more decisive than I was expecting. But I didn't think too much of it at the time.

My one loss was on a smaller, fairly open board. Bullet-dodging was something I had tested quite thoroughly. Yet my tanks ran straight into the enemy tanks' bullets instead of dodging them. That was also quite surprising.

My bot's algorithm is based on running through a number of scenarios and, for each applicable scenario, calculating a value for each of the 6 possible tank actions for each tank. At the time I suspected that a number of scenarios had combined to create a cumulative value for moving forward that was greater than the value of either dodging or shooting approaching bullets.

It was only a few days later that I discovered the real reason for the lack of bullet-dodging behaviour... but I'll get to that later.

The elimination round

I was expecting the top 2 players in each pool to go through to the finals at the rAge computer gaming expo on 5 October. However there was a twist in proceedings...The players were seeded based on how many games they had won in the pool stage. The top 16 seeds went into an elimination round. The elimination round worked as follows: seed 1 played seed 16, seed 2 played seed 15, and so on. The winners of those 8 matches would go through to the finals at rAge.

Although I was one of the top 5 seeds, I had a sinking feeling at this point as a single loss could knock one out the tournament. Imagine my feeling when I saw the board for my knock-out game... It was the same board as my one loss in the pool rounds! And sadly the result was the same... my tanks raced towards the enemy tanks, ate a bullet each and I was knocked out the competition!

I was disappointed and a little irritated that everything had hinged on a single game. At least last year the format was a double elimination, so a single bad game couldn't knock a good bot out of the competition.

On the other hand, my expectations had been low to begin with. So the end result wasn't different from what I had been expecting. And I had the added satisfaction of knowing that my bot had generally been much stronger than expected. So all I could do was be philosophical about the loss...

The debacle of the official test harness

Bots that froze

Something surprising and quite sad happened to some contestants. On start-up their bots froze and did nothing for the rest of the game.Last year's winner, Jaco Cronje, had this problem on a number of boards. But he won easily when his bot played. Fortunately he won enough games to be one of the lower seeds of the top 16, and during the knockout round he got the board that his bot did not freeze on. He won that game easily and made it through to the finals.

Another competitor, Bernhard Häussermann, had a similar issue which prevented his tank from moving in any of his games. This is very sad when you consider how much effort people put into their entries for the competition.

But was it really his own error that led to the frozen tanks?

The mysterious case of the frozen tanks

In the week after the play-offs Jaco and Bernhard went on a mission to identify the reason for their tanks freezing.During the final weekend before submission, Jaco had set his bot running through the night to check for weird issues. It had run fine. The competition organisers had said they would use the same test harness to run the competition as they had provided for the players to test against. So how was it possible for Jaco to get a different result during the play-offs?

The details of their digging are on this page of the google group for the competition. Here's the short version...

A number of players had noticed that the id's of the 4 tanks were always 0, 1, 2 and 3. One player would get tanks 0 and 3. The other player would get tank id's 1 and 2. Since tank movement was resolved in the order of tank id's, this ordering made it a little fairer to choose who got precedence when two tanks tried to move into the same space on the same turn.

But in the play-offs the test harness started assigning very large tank id's. This caused out of range exceptions for some of the bots when they tried to save the tank information into a much smaller array.

Perhaps the test harness was modified to run multiple games without requiring a restart, instead of the correct approach of building a "tournament runner application" which would re-start the unmodified test harness between games.

So why didn't my tanks dodge bullets?

On Wednesday I had a hunch about why my bullets didn't dodge bullets. And why they seemed to behave differently to what I'd been expecting.So on Wednesday evening, after getting home from a Data Science meetup group, I put my hunch to the test.

I simulated a tank id outside the range of 0 to 3. As expected, this caused my bot to also throw an out-of-range exception in the part of my code which configures tank movement sequences.

The reason my bot didn't freeze is that I had put in a fail-safe mechanism to catch any errors made by my bot.

If an error occurred, I would see if my bot had made a move yet. If it hadn't, I would run a very simple algorithm so that it would at least make a move rather than just freezing. My closest tank would attack the enemy base, and my other tank would attack the closest enemy tank. There was no bullet dodging logic, as I didn't want to risk running any extra code that could have been the cause of the original error.

So this explains why my bot had failed to dodge bullets. And also why it had acted so single-mindedly.

I had worked so hard on my scenario-driven bot in the final (extended) week of the competition. And it probably didn't even get used due to this bug. How sad. Since I still won my pool with this very basic bot, imagine what I could have done with my much more sophisticated bot!

[Bernhard was in the same pool as me. So without the tank ID bug I might not have won the pool. But at least my best bot would have been on display.]

To be or not to be bitter

So at this point I have two choices...Jaco and Bernhard have decompiled the jar file for the official test harness, and there should be no way that the test harness can assign a tank id out of the range 0 to 3. So the organizers must have changed the test harness after the final submission date, in which case it nullifies its purpose as a test harness. I could choose to be bitter about this.

My second choice is to suck it up and learn from the mistakes that I made. And I can only do that if I don't take the easy way out by blaming someone else for what went wrong (tempting though that is).

I used a variety of defensive coding practices, including my fail-safe algorithm. But I failed to code defensively against the possibility of the tank id's being outside the range of 0 to 3. I have a vague memory of feeling very uncomfortable at making this assumption. But I made the poor judgement call of ignoring my intuition and going with a cheap solution rather than the right solution (or even just a cheap solution with more defensive coding). That mistake is my mistake, and it's something I can learn from.

Keeping the goal in mind

The big picture with entering competitions like this, is that you are not doing it for the prize money or the fame (though those are useful motivators to help give you a focus for your efforts). Doing so would be a form of moonlighting and is arguably unethical.To some extent you are doing it for the fun and the camaraderie - the opportunity to interact with smart developers who enjoy challenging themselves with very tricky problems. But I get similar enjoyment from playing German-style board games with my colleagues and friends - and with far less investment of time.

However the most important reason is for the learning experience. You are exposing yourself to a challenging situation which will stretch you to your limits, and in which you are bound to make some mistakes (as well as learn new coding techniques). The mistakes you make and the lessons you learn are part of your growth as a programmer.

With this perspective I would rather learn from my mistakes than look for someone to blame.

The alternative is to become bitter at the injustice of the situation. But life is full of injustice, and in many cases (such as this one) it is not caused by malevolence, but simply by human fallibility. As software developers we know all too well how seemingly innocuous changes can have unexpectedly large side-effects.

I'm not advocating that we should lower the standards we set for ourselves and others. But I think we should balance that against having the maturity to forgive ourselves and others when we make unfortunate mistakes despite our best intentions.

The most valuable lesson I learnt

The most valuable lesson learnt was not about coding more defensively. Or about making testability a primary consideration. Or to avoid premature optimization. Or even to listen to my gut feeling when I'm feeling uncomfortable about code that I'm writing (valuable though that is).Those are all things I already knew. As the saying goes, experience is that thing that allows you to recognise a mistake when you make it again!

The most valuable lesson was a new insight: beware of the second system effect!

Last year I placed fourth in the challenge with a fairly standard NegaMax search tree approach. I was conservative in my approach, did the basics well, and got a great result! But I had some innovative ideas which I never got as far as implementing...

This year I wanted to do better than last year. And I wanted to push the envelope more. And as a result I fell foul of the second system effect, and did worse than last year.

And this is not the only recent instance of this happening...

In 2011 I did really well in the UDT Super 15 Rugby Fantasy League with a simple statistical model (built in Excel) and a linear programming model for selecting my Fantasy League team each round. And I placed in the 99.4th percentile (183rd out of around 30 000 entrants)!

In 2012 I wanted to do even better, so I used the R programming language to build a much more complex statistical model. I put a lot more effort into it. Yet I only placed in the 90th percentile. The second systems effect again!

In both cases I learnt far more from my second system than from the more conservative (but more successful) first system. So it's not all bad, since learning and self-development is the primary goal. But in future I'd like to find a better balance between getting a good result and "expressing my creativity".

Wrap-up

As one of the eight finalists in the 2012 competition, I ended up being interviewed after the play-offs last year. That interview can be found on youtube.Although I didn't make it to the final eight this year, I still found myself being roped into an interview. I'll update this page with the link once that interview has been uploaded to youtube.

For anyone who's interested, I've also uploaded the source code for my bot entry on GitHub.

There's more detail on the approach that I and the other contestants took at this page on the google groups forum.

What's next?

At some point I'd like to post a blog entry on my shortest path algorithm, as I came up with a neat trick to side-step the various complexities of doing a Dijkstra algorithm with binary heaps, pairing heaps, r-divisions on planar graphs, and so forth.But first I'd like to wrap up my series of posts on an interesting problem in recreational Mathematics known as the Josephus problem.

Friday, September 13, 2013

The past two months...

A break from blogging

Over the past 2 months I've taken a break from blogging.During that time I've spent a week in the Kruger park, worked crazy hours on a software architecture investigation for a client, and worked equally crazy hours in my spare time on the 2013 Entelect Artificial Intelligence competition.

This blog post is a recap of those 3 activities.

The Kruger Park

I took my family camping at Punda Maria campsite in the Northern section of the Kruger Park from 14 to 19 July.Back in 2008 we had one or our best Kruger trips ever in that part of the park, seeing no less than 4 leopard, including an excellent sighting where the leopard was metres from our car. So we had extolled the virtues of Northern Kruger to our friends John and Sarah, who were accompanying us with their 3 children.

|

| A leopard sighting from our trip to Northern Kruger in October 2008 |

In my opinion, Northern Kruger is the most beautiful part of the Kruger park, particularly the area near the Pafuri picnic site and along the Nyala drive. So although we were disappointed at not seeing any of the big cats, we still enjoyed our visit immensely.

Northern Kruger has a reputation for being a birders' paradise, and we certainly saw our fair share of beautiful birds. I even managed to capture a Lilac-Breasted Roller in flight, something I had tried many times before without success.

|

| A lilac-breasted roller in flight |

|

| A korhaan sighting on the way back from Pafuri |

|

| A hornbill that scavenges left-overs at the Babalala picnic area |

An architectural investigation

On returning from Kruger, I jumped straight into a 4 week architectural investigation for a client. The client was concerned about their dependence on a 3rd party integration hub whose host was experiencing financial uncertainty. My role was to assist the client in understanding their IT ecosystem, assess their and their business partners' dependencies on the 3rd party vendor, and provide a roadmap (with estimated costs) for mitigating the risk.This is very different from the work that I do on a day to day basis. Most of the time I am one of the software designers/architects on a team of 25 to 30 developers, testers, business analysts, architects and project managers. I spend my day doing UML diagrams for new features, assisting developers when requested, estimating task sizes, performing code reviews, troubleshooting performance issues, and so forth. In other words, I am very much a cog in a bigger machine.

With the architectural investigation I was on my own. I had complete autonomy to carry out the investigation as I saw fit. I love doing investigative work. I love work that has strategic impact. I love feeling my way towards a solution. So I revelled in this independence.

Although exciting, it was very intense work too. On Friday 16 August I gave a presentation of my findings to the technical stakeholders. I worked right through the night on both the Tuesday and Thursday night beforehand, clocking over 25 hours of work on Tuesday and Wednesday, and over 27 hours on Thursday and Friday.

I've done all-nighters before in my career. But never two in one week. It's definitely not something I would recommend...

I was very happy with the outcome of the work though, and the presentation seemed to go very well, despite getting off to a slow start due to my lack of sleep and the adrenalin of putting the finishing touches to the presentation only minutes before the meeting started!

The final presentation to the executive committee took place the following Tuesday. The previous presentation had included a lot more technical detail, and the focus had been on presenting a wide variety of options. The final presentation was much more condensed, and focused on 2 primary recommendations. The presentation went extremely well, and the feedback was very positive - both from the customer's CFO and from a number of my superiors at Dariel.

A few days later I was asked whether I would like to do similar investigations in future. I replied that this was similar to asking someone who has just completed the Comrades Ultra-marathon whether they would like to do it again next year!

The 2013 Entelect AI Challenge

Last year I participated in the inaugural Entelect R 100,000 Artificial Intelligence Challenge. I was delighted to place 4th out of 101 contestants.I was really hoping to better that this year. However the 2013 challenge took place over the same period as the trip to Kruger and the architectural investigation. Additionally, last year's contestants had from mid-July until 24 September to submit their entries. This year the closing date was 2nd September. So there was simply less time to recover from the long hours on the architectural investigation.

This year's competition was to program two tanks to take on two other tanks on one of 8 boards, up to 81x81 squares in size, in a simultaneous movement tank battle based loosely on the 1980's arcade game Battle City, and using SOAP web services to communicate to the server. This was a massive jump in complexity from last year's competition, which was a Tron-like turn-based game with one unit per player, played on a 30x30 sphere with communication via reading and writing a text file.

If I was a wiser man, I would have thrown in the towel before even starting. Fortunately I'm not!

I started my career as an Operations Research consultant at the CSIR and I still retain a strong passion for decision optimization and decision automation. My outlet for that passion is entering competitions like the Entelect AI Challenge.

It's also a great way to keep my coding skills current, as my role as a software designer means that I don't get to write as much production code as I would like.

So, against my better judgement, and despite all obstacles, I have participated in the Entelect programming challenge again this year! It's been very tiring and the stress levels have been immense. I have barely lurched over the finish line, assisted in no small measure by the final entry date being extended by a week from 2nd September to Monday 9th September.

But at least I have managed to complete an entry. And one that I'm reasonably proud of, even though I don't think it will be good enough to get me to the final 8 like last year.

The camaraderie last year was amazing, and the way the contestants have assisted and encouraged each other in this year's competition has been no less amazing. You would never guess we were competing with each other for a prize of R 100,000 (roughly $10,000). That's a lot of money and the prize for second place is tiny by comparison. But you'd never guess it by the way contestants have generously shared advice with each other, and encouraged each other to keep going when it gets tough. And believe me, this has been a very tough challenge this year (as shown by the far smaller number of people who got as far as submitting an entry).

The play-offs for the competition take place tomorrow evening at the Fire and Ice Protea Hotel in Melrose Arch, Johannesburg. I will be there to share in that spirit of camaraderie again.

Although my expectations for my bot are low, my hopes are high. I'm hoping that my bot gives it horns...

... and that some remaining bug doesn't sabotage my efforts and leave me meekly hiding in the shadows!

What comes next?

I'm still busy with a couple of blog postings for the problem of calculating the last person left in a circle if every second person is asked to leave. I hope to post those shortly and close off the series.I worked out a few very nice algorithms for the Battle City AI challenge. So I'd like to write a series of articles on those as well. I'd also like to analyse some of the mistakes I made in the competition, and what I would do differently if I could start over.

And once my bot is knocked out the competition I'm planning on posting the code for my entry to GitHub.

I suspect this will be of interest to the other contestants in the competition (we have already been doing post-mortems of our strategies on the unofficial google groups forum for the competition). But it may also have a few nice ideas that will be useful to people competing in other AI competitions in future.

So please keep a lookout for those!

Sunday, June 09, 2013

More (an efficient circle elimination algorithm for arbitrary intervals)

All posts in this series:

- One Potato: A description of the problem

- Two potato: The basic equations

- Three potato: An algebraic formula for the last person left

- Four: A neat calculation method using binary numbers

- Five potato: F# functions to check the various formulae

- Six potato: Using a calculation graph to see the pattern

- Seven potato: Generalizing to other intervals

- More: An efficient algorithm for arbitrary intervals

- Online research: In which I discover that it is called the Josephus problem

Introduction

In my previous post I generated a recursive equation for calculating the last person left in a circle if every $k^{\text{th}}$ person is removed from the circle.I also wrote an F# program which highlighted a weakness of this algorithm. For circles with more than 60 000 people, the F# algorithm would experience a stack overflow.

In this post I am going to develop a more efficient algorithm to address this flaw.

Warning: Lots of algebra ahead!

The initial formatting of the Mathematical equations can be slow. If so, please be patient.

The recursive equation for arbitrary intervals

To recap, here is the main equation from the previous blog post: $$ f_k(n) = [f_k(n-1) + k - 1 ] \bmod n + 1 \tag{4} $$[This is very much a continuation of the previous post, so I have gone with the same equation numbering].

Arranging the function outputs in rows

Recall the calculation graph from post 6 in the series:Notice how $f(n)$ "clocks over" for the first value in each row.

Let's arrange the values of $f_k(n)$ into rows in a similar way. To do this I am going to consider the values of $n$ at which $f_k(n)$ clocks over. Every time this happens, a new row will be started.

Let $r$ be the index of a row in the graph.

Let $n_r$ be the first value of $n$ in row $r$.

Now consider the previous value of $n$: $n_r - 1$. Since the modulo operator clocks over for $n_r$, we can take the argument to this operator in equation 4, and we must have:

$$ \begin{align*} f_k(n_r - 1) + k - 1 &\ge n_r \\ \therefore f_k(n_r - 1) &\ge n_r - (k - 1)\\ \end{align*} $$

However, we also know - by the definition of $f_k(n)$ - that:

$$ f_k(n_r - 1) <= n_r - 1 $$

So it must be that:

$$ \begin{align*} f_k(n_r - 1) &= n_r - j & \text{for some } j \in \mathbb{N} \text{ such that } 1 \le j \le k-1 \tag{5} \end{align*} $$

From our definition of $n_r$ we know that $f_k(n)$ doesn't clock over for any $n \in \left\{n_{r-1}+1, n_{r-1} + 2, ..., n_r - 1\right\}$.

And because it doesn't clock over, we can remove the mod operator in equation 4, which simplifies to: $$ \begin{align*} f_k(n) &= [f_k(n-1) + k - 1 ] \bmod n + 1 & \text{from equation 4}\\ &= [f_k(n-1) + k - 1 ] + 1 \\ &= f_k(n-1) + k & \forall n \in \left\{n_{r-1}+1, n_{r-1} + 2, ..., n_r - 1\right\} \\ \\ \therefore f_k(n_{r-1} + i) &= f_k(n_{r-1}) + ik &\forall i \in \left\{0, 1, \ldots, n_r - n_{r-1}-1 \right\} \tag{6} \\ \\ \text{In particular:} \\ f_k(n_r - 1) &= f_k(n_{r-1}) + k.(n_r - n_{r-1} - 1) \tag{7} \\ \end{align*} $$

Equations 5 and 7 give us two different formulae for $f_k(n_r-1)$, so we can combine them: $$ \begin{align*} n_r - j &= f_k(n_{r-1}) + k.(n_r - n_{r-1} - 1)\\ \Leftrightarrow (k-1).n_r &= k.n_{r-1} + k - j - f_k(n_{r-1}) \tag{8} \end{align*} $$

First we are going to use equation 8 to determine the value of j.

We can re-arrange equation 8 as follows: $$ \begin{align*} j - 1 & = k.n_{r - 1} + k - 1 - f_k(n_{r-1}) - (k-1).n_r \\ & = [(k-1)+1].n_{r - 1} + (k-1) - f_k(n_{r-1}) - (k-1).n_r \\ & = n_{r-1} - f_k(n_{r-1}) + (k-1).[n_{r - 1} + 1 - n_r] \tag{9} \end{align*} $$

Why $j-1$ on the left side of the equation?

Recall from equation 5 that $1 \leq j \leq k-1 $. So $0 \leq j - 1 \leq k-2 < k-1$.

And hence $j - 1 = (j - 1) \bmod (k - 1)$. This allows us to simplify the right hand side of equation 9 by taking the "$\bmod (k-1)$" of both sides.

So: $$ \begin{align*} j - 1 & = [j - 1] \bmod (k - 1) \\ & = [n_{r-1} - f_k(n_{r-1}) + (k-1).[n_{r - 1} + 1 - n_r]] \bmod (k - 1) & \text{from equation 9} \\ & = [n_{r-1} - f_k(n_{r-1})] \bmod (k - 1) & \text{since multiples of }k - 1\text{ can be removed} \\ \\ \Rightarrow j & = [n_{r-1} - f_k(n_{r-1})] \bmod (k - 1) + 1 \tag{10} \end{align*} $$

Now that we have a formula for j, we can determine both $n_r$ (using equation 8) and $f_k(n_r)$ (using equations 4 and 5).

Let's determine $n_r$ first. Substituting equation 10 into equation 8 gives:

$$ \begin{align*} (k-1).n_r &= k.n_{r-1} + k - j - f_k(n_{r-1}) &\text{from equation 8} \\ &= k.n_{r-1} + k - ([n_{r-1} - f_k(n_{r-1})] \bmod (k - 1) + 1) - f_k(n_{r-1}) &\text{from equation 10} \\ &= [(k-1) + 1].n_{r-1} + (k-1) - [n_{r-1} - f_k(n_{r-1})] \bmod (k - 1) - f_k(n_{r-1}) \\ &= (k-1).n_{r-1} + n_{r-1} + (k-1) - [n_{r-1} - f_k(n_{r-1})] \bmod (k - 1) - f_k(n_{r-1}) \\ &= (k-1)[n_{r-1} + 1] + n_{r-1} - f_k(n_{r-1}) - [n_{r-1} - f_k(n_{r-1})] \bmod (k - 1) \\ \Rightarrow n_r &= n_{r-1} + 1 + \frac{n_{r-1} - f_k(n_{r-1}) - [n_{r-1} - f_k(n_{r-1})] \bmod (k - 1) }{k-1} \\ \end{align*} $$ This rather complicated quotient can be simplified quite significantly by observing that: $$ \frac{m - m \bmod d}{d} = \lfloor \frac{m}{d} \rfloor $$

This follows by expressing $m$ as: $$ \begin{align*} m &= qd+r & \text{where }0 \leq r < d &\text{i.e. }r = m \bmod d \\ \Rightarrow q & = \frac{m - r}{d} \\ & = \frac{m - m \bmod d}{d} \\ \text{But: } q = \lfloor \frac{m}{d} \rfloor &\text{and the result follows} \end{align*} $$

So substituting $n_{r-1} - f_k(n_{r-1})$ for $m$ and $k-1$ for $d$ we obtain the following simplification:

$$ n_r = n_{r-1} + 1 + \lfloor \frac{n_{r-1} - f_k(n_{r-1}) }{ k - 1 } \rfloor \tag{11} $$

Now let's determine the value of $f_k(n_r)$:

$$ \begin{align*} f_k(n_r) &= [f_k(n_r - 1) + k - 1 ] \bmod n_r + 1 & \text{from equation 4} \\ &= [(n_r - j) + k - 1 ] \bmod n_r + 1 & \text{from equation 5} \\ &= [k - 1 - j] \bmod n_r + 1 \\ &= [k - 1 - ([n_{r-1} - f_k(n_{r-1})] \bmod (k - 1) + 1)] \bmod n_r + 1 & \text{from equation 10} \\ &= [k - 2 - [n_{r-1} - f_k(n_{r-1})] \bmod (k - 1)] \bmod n_r + 1 \end{align*} $$

This is already sufficient. However we can simplify things further. When $n_r \ge k-1$, the $ \text{mod }n_r $ can be dropped. This gives the following set of formulae:

$$ \begin{align*} f_k(n_{1}) & = f(1) = 1 \tag{12} \\ f_k(n_r) & = k - 1 - [n_{r-1} - f_k(n_{r-1})] \bmod (k - 1) & \text{ where } n_r \geq k - 1 \tag{13} \\ f_k(n_r) & = [k - 2 - [n_{r-1} - f_k(n_{r-1})] \bmod (k - 1)] \bmod n_r + 1 & \forall n_r \text{ where } r > 1 \tag{14} \\ \end{align*} $$

So equations 11 through to 14 provide formulae for:

- $n_r$ in terms of $n_{r-1}$ and $f_k(n_{r-1})$

- $f_k(n_r)$ in terms of $n_r$, $n_{r-1}$ and $f_k(n_{r-1})$

- $n_1$ and $f_k(n_1)$

With these pieces in place we are ready to create a more efficient algorithm for calculating $f_k(n)$ for arbitrary n.

The row-based algorithm

Given a value $n$ for which we wish to calculate $f_k(n)$, we can't work backwards down to $f_k(1)$ as we have done in the past. The problem is that we don't know which row $r$ it falls within, or what $n_r$ and $f(n_r)$ are.Instead we are going to have to work our way forwards to find the row that $n$ belongs in. We will start at $n_{1} = 1, f_k(1) = 1$. From this we will derive $n_{2}$ and $f(n_{2})$. We will continue generating this sequence of values for $n_r$ and $n_{r+1}$ until we find a value of $r$ for which $n_r \le n < n_{r+1}$. When we do, we can use equation 6 to determine $f_k(n)$: $$ f_k(n) = f_k(n_r) + k.(n-n_r) \tag{15} $$

While working our way through the rows we will keep track of the values for the next row: $n_{r+1}$ and $f_k(n_{r+1})$. This will allow us to easily calculate $n_{r+2}$ and $f_k(n_{r + 2})$.

Below is an F# function to calculate these values for the next row:

let getNextRowStartSizeAndLabel thisRowStartSize thisRowStartLabel interval =

let floor

= int( System.Math.Floor( double(thisRowStartSize - thisRowStartLabel)

/ double(interval - 1) )

)

let nextRowStartSize = thisRowStartSize + 1 + floor

let nextRowStartLabel =

if nextRowStartSize < interval - 1 then

( interval - 2

- (thisRowStartSize - thisRowStartLabel) % (interval - 1)

) % nextRowStartSize + 1

else

interval - 1 - (thisRowStartSize - thisRowStartLabel) % (interval - 1)

(nextRowStartSize, nextRowStartLabel)

The variables map to the formula as follows:

- thisRowStartSize $\mapsto n_r$

- thisRowStartLabel $\mapsto f_k(n_r)$

- interval $\mapsto k$

- nextRowStartSize $\mapsto n_{r+1}$

- nextRowStartLabel $\mapsto f_k(n_{r+1})$

The following F# functions use the function above to calculate $f_k(n)$:

let rec getLabelOfLastLeftInCircleWithIntervalByRow

thisRowStartSize thisRowStartLabel

nextRowStartSize nextRowStartLabel

interval sizeOfCircle =

if (sizeOfCircle >= thisRowStartSize) && (sizeOfCircle < nextRowStartSize) then

thisRowStartLabel + interval * (sizeOfCircle - thisRowStartSize)

else

let (newNextRowStartSize, newNextRowStartLabel)

= getNextRowStartSizeAndLabel nextRowStartSize nextRowStartLabel interval

getLabelOfLastLeftInCircleWithIntervalByRow

nextRowStartSize nextRowStartLabel

newNextRowStartSize newNextRowStartLabel

interval sizeOfCircle

let calcLastLeftInCircleWithIntervalByRow interval sizeOfCircle =

if sizeOfCircle < 1 then

raise (System.ArgumentException("The size of the circle must be positive!"))

if interval < 2 then

raise (System.ArgumentException("The interval must be 2 or more!"))

let (nextRowStartSize, nextRowStartLabel) = getNextRowStartSizeAndLabel 1 1 interval

getLabelOfLastLeftInCircleWithIntervalByRow

1 1 nextRowStartSize nextRowStartLabel interval sizeOfCircle

The efficiency of the row-based algorithm

Look back at equation 11:$$ n_r = n_{r-1} + 1 + \lfloor \frac{n_{r-1} - f_k(n_{r-1})}{k-1} \rfloor $$

Consider what happens as $n_{r-1}$ starts becoming large compared to $k$. $f_k(n_{r-1}) \le k$ (since a "clock-over" occurs at $n_{r-1}$), so this term will become very small. So as $n_{r-1}$ becomes large:

$$ n_r \approx (1 + \frac{1}{k-1}).n_{r-1} $$

So the $\left\{n_r\right\}$ series is approximately a geometric progression. Since its values are going to grow exponentially, the algorithm will rapidly find the row containing n, even for very large values of n. The size of the stack is going to scale as O(log n), so we are far less likely to reach a value of n which will cause stack overflows.

If we run the latest F# algorithm it is indeed blindingly fast:

It was able to handle some very large 32 bit numbers almost instantaneously.

I would expect it to fail when the next higher $n_r$ value is larger than the maximum 32 bit integer. It might be possible to work around this by inserting a "try catch" block to detect and recover from this situation, since we can still calculate the answer using the previous value of $n_r$.

I thought that another failure condition could be with very large values of $k$, since the exponential growth rate would be very small when $k$ is large. So I tested the algorithm with $k$ and $n$ both equal to a billion (one thousand million). The calculation took slightly under 32 seconds on my i5 laptop.

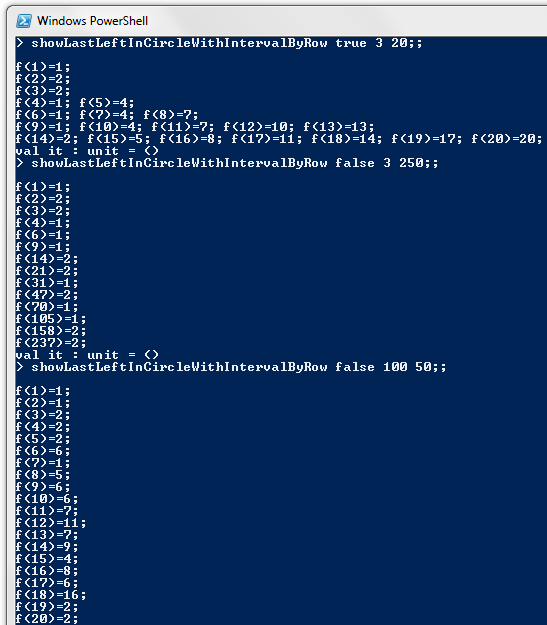

Displaying the rows in the row-based algorithm

Ideally we would like a closed form solution for arbitrary k. I tried looking for patterns in the rows for various values of k, but I couldn't see a consistent pattern.For anyone who would like to try, or just if you're curious to see the row structure in action, here is an F# script to allow you to see all $(n, f_k(n))$ pairs arranged in rows. It depends on the function getNextRowStartSizeAndLabel defined earlier, so make sure that function has been defined in your F# interactive session:

let rec showLabelsOfLastLeftInCircleWithIntervalByRow

showFullRow thisRowStartSize thisRowStartLabel

nextRowStartSize nextRowStartLabel interval

sizeOfCircle maxSizeOfCircle =

if (sizeOfCircle <= maxSizeOfCircle) then

let result = thisRowStartLabel + interval * (sizeOfCircle - thisRowStartSize)

if sizeOfCircle = thisRowStartSize then

System.Console.WriteLine()

System.Console.Write( "f({0})={1}; ", sizeOfCircle, result)

elif showFullRow then

System.Console.Write( "f({0})={1}; ", sizeOfCircle, result)

if sizeOfCircle < nextRowStartSize - 1 then

showLabelsOfLastLeftInCircleWithIntervalByRow

showFullRow thisRowStartSize thisRowStartLabel

nextRowStartSize nextRowStartLabel

interval (sizeOfCircle+1) maxSizeOfCircle

else

let (newNextRowStartSize, newNextRowStartLabel) =

getNextRowStartSizeAndLabel nextRowStartSize nextRowStartLabel interval

showLabelsOfLastLeftInCircleWithIntervalByRow

showFullRow nextRowStartSize nextRowStartLabel

newNextRowStartSize newNextRowStartLabel

interval (sizeOfCircle+1) maxSizeOfCircle

let showLastLeftInCircleWithIntervalByRow showFullRow interval maxSizeOfCircle =

if maxSizeOfCircle < 1 then

raise

( System.ArgumentException(

"The maximum size of the circle must be 1 or more!")

)

if interval < 2 then

raise

( System.ArgumentException(

"The interval must be 2 or more!")

)

let (nextRowStartSize, nextRowStartLabel) =

getNextRowStartSizeAndLabel 1 1 interval

showLabelsOfLastLeftInCircleWithIntervalByRow

showFullRow 1 1

nextRowStartSize nextRowStartLabel interval

1 maxSizeOfCircle

System.Console.WriteLine()

When the showFullRow parameter to the function is false, then only the first pair in each row will be shown. I find this is a lot more useful than seeing the intermediate values as well, which soon leads to each console line overflowing.

Validating the various algorithms against one other

The following F# script can be used to check that the 3 algorithms produce the same answers:

type circleCalculationWithInterval = {

SizeOfCircle: int;

Interval: int;

LastLeftInCircleByBruteForce: int;

LastLeftInCircleByRecursiveFormula: int;

LastLeftInCircleByRow: int

}

let getCircleCalculationWithInterval includeBruteForce interval sizeOfCircle =

// Brute force is slow to calculate. Provide the option to omit it...

let lastLeftInCircleByBruteForce =

match includeBruteForce with

| true -> calcLastLeftInCircleWithIntervalByBruteForce interval sizeOfCircle

| false -> 0

let lastLeftInCircleByRecursiveFormula =

calcLastLeftInCircleWithIntervalByRecursiveFormula interval sizeOfCircle

let lastLeftInCircleByRow =

calcLastLeftInCircleWithIntervalByRow interval sizeOfCircle

let circleCalc = {

SizeOfCircle = sizeOfCircle;

Interval = interval;

LastLeftInCircleByBruteForce = lastLeftInCircleByBruteForce;

LastLeftInCircleByRecursiveFormula = lastLeftInCircleByRecursiveFormula;

LastLeftInCircleByRow = lastLeftInCircleByRow

}

circleCalc

You can then check the results using snippets similar to the following:

// With brute force:

[1..1000] |> List.map (getCircleCalculationWithInterval true 2) |> List.filter (

fun cc -> cc.LastLeftInCircleByRow <> cc.LastLeftInCircleByBruteForce

|| cc.LastLeftInCircleByRow <> cc.LastLeftInCircleByRecursiveFormula

);;

[1..100] |> List.map (getCircleCalculationWithInterval true 100) |> List.filter (

fun cc -> cc.LastLeftInCircleByRow <> cc.LastLeftInCircleByBruteForce

|| cc.LastLeftInCircleByRow <> cc.LastLeftInCircleByRecursiveFormula

);;

// Without brute force:

[1..10000] |> List.map (getCircleCalculationWithInterval false 2) |> List.filter (

fun cc -> cc.LastLeftInCircleByRow <> cc.LastLeftInCircleByRecursiveFormula

);;

[1..10000] |> List.map (getCircleCalculationWithInterval false 100) |> List.filter (

fun cc -> cc.LastLeftInCircleByRow <> cc.LastLeftInCircleByRecursiveFormula

);;

A new proof for the formula when removing every second person

We can use the general formulae to provide a new derivation of the closed form solution when every second person is removed from the circle.The proof ends up being remarkably simple, because $k - 1 = 1$. Terms such as $(...) \bmod (k-1)$ simply fall away, since any integer mod 1 is zero. Also some terms involve a division by $k-1$, and these also simplify very nicely.

But these simplifications only work for $k = 2$. This reduces my confidence of finding a closed form solution for the general case.

Anyway, here's the proof:

$$ \begin{align*} f_2(n_r) & = k - 1 - [n_{r-1} - f_k(n_{r-1})] \bmod (k - 1) & \text{ from equation 13, since } n_r \geq k - 1 = 1 \\ & = 2 - 1 - [n_{r-1} - f_2(n_{r-1})] \bmod 1 & \text{(but any number mod 1 is 0)} \\ & = 1 & \text{ for }n_r > 1 \\ \\ \text{but: } f_2(n_{1}) & = f(1) = 1 & \text{from equation 12} \\ \\ \therefore f_2(n_r) & = 1 & \forall n_r \tag{16} \\ \\ n_r & = n_{r-1} + 1 + \lfloor \frac{n_{r-1} - f_k(n_{r-1}) }{ k - 1 } \rfloor & \text{ from equation 11} \\ & = n_{r-1} + 1 + \lfloor n_{r-1} - f_k(n_{r-1}) \rfloor & \\ & = n_{r-1} + 1 + n_{r-1} - f_k(n_{r-1}) & \text{(since floor has an integer argument)} \\ & = n_{r-1} + 1 + n_{r-1} - 1 & \text{since }f_k(n_{r-1}) = 1 \text{ by equation 16 } \\ & = 2.n_{r-1} & \text{a geometric progression} \tag{17} \\ \\ \therefore n_r & = 2^{r-1} & \text{by equations 16 and 17} \tag{18} \\ \\ f_2(n) & = f_2(n_r) + 2.(n-n_r) & \text{from equation 15, where: } n_r \le n < n_{r+1} \\ & = 1 + 2(n - 2^{r-1}) & \text{ where: } 2^{r-1} \le n \le 2^r \text{(by equation 18)}\\ & = 1 + 2n - 2.2^{r-1} & \text{where: } 2^{r-1} \le 2^{log_2 n} < 2^r \\ & = 1 + 2n - 2.2^{\lfloor log_2 n \rfloor} & \text{since: } 2^{r-1} = 2^{\lfloor log_2 n \rfloor} < 2^{r}\\ & = 2n + 1 + 2^{{\lfloor log_2 n \rfloor} + 1} \tag{19} \\ \end{align*} $$

And this is the same equation derived in blog post three in the series, albeit through a completely different method.

Conclusion

In this blog post and the previous post I derived formulae which are applicable to intervals other than 2.I was able to use these formulae to find a new proof of the closed form solution for the original interval of 2 (i.e. removing every second person from the circle).

I wasn't able to derive a closed form solution for arbitrary intervals. However I was able to devise an efficient algorithm for calculating the answer.

Next time

I feel that I have progressed as far as I can with the problem on my own. I don't know whether a closed form solution exists. But even if it does, I doubt I'm going to find it.So now it's time to search the internet to see if this is a problem which other people have solved. In my next blog post, I hope to report back on what I found.

I'm also hoping to share some of the scripts and tools I used to generate the artefacts in this series of blog posts.

But most of all, I'm looking forward to wrapping up this series, so that I can move on to other topics that capture my interest!

Thursday, June 06, 2013

Seven potato (generalizing to other intervals)

All posts in this series:

- One Potato: A description of the problem

- Two potato: The basic equations

- Three potato: An algebraic formula for the last person left

- Four: A neat calculation method using binary numbers

- Five potato: F# functions to check the various formulae

- Six potato: Using a calculation graph to see the pattern

- Seven potato: Generalizing to other intervals

- More: An efficient algorithm for arbitrary intervals

- Online research: In which I discover that it is called the Josephus problem

Introduction

In the previous posts in the series I derived Mathematical formulae for calculating the last person left in a circle if every second person is asked to leave (continuing until only one person remains).But what if we want to remove every third person from the list, or every eighth person (as in the "One Potato, Two potato" children's game)?

In this post and the next I will attempt to generalize the results to arbitrary intervals.

The challenge

Let $f_k(n)$ be the label of the last person left in a circle of $n$ people when every $k^{\text{th}}$ person is asked to leave.Clearly $f_k(1) = 1$.

When the interval is two, the basic equations allowed us to express $f_{2}(2n+b)$ in terms of $f_{2}(n)$. My gut feel is that this same method won't be useful for an interval of $k$, because it will only allow us to express $f_k(kn+d)$ in terms of $f_k((k-1)n)$, not in terms of $f_k(n)$.

Instead I'm going to find a way to express $f_k(n)$ in terms of $f_k(n-1)$.

Interestingly, the inspiration for doing this comes from the generalization of the F# brute force calculation method presented in the fifth blog post in the series. So not only have the functional algorithms proved useful for checking the Mathematics. They have also led to new Mathematical insights!

Brute force computation

So to recap, let's write an F# script which generalizes the brute force calculation method to arbitrary intervals:let rec getLastLabelInCircleWithIntervalByBruteForce numberToSkip interval circle =

match (numberToSkip, circle) with

| (_, current :: []) -> current

| (0, current :: restOfCircle)

-> getLastLabelInCircleWithIntervalByBruteForce

(interval-1) interval restOfCircle

| (negativeToSkip, _) when negativeToSkip < 0

-> raise (System.ArgumentException("The number to skip can't be negative!"))

| (positiveToSkip, current :: restOfCircle)

-> getLastLabelInCircleWithIntervalByBruteForce

(positiveToSkip-1) interval (restOfCircle @ [current])

| (_,_) -> raise (System.ArgumentException("The circle mustn't be empty!"))

let rec calcLastLeftInCircleWithIntervalByBruteForce interval sizeOfCircle =

getLastLabelInCircleWithIntervalByBruteForce

(interval-1) interval [1 .. sizeOfCircle]

The method recursively skips a person and moves them to the end of the list until it reaches the next person to remove from the circle. It removes that person and starts over with the next person to skip being at the front of the list.

It runs in a similar time to the previous brute force algorithm.

A recursive formula

Let's consider what happens when we have $n$ people in a circle, and we remove the next person. Let $m$ be the label of the next person removed from the circle. As with the F# algorithm, after removing person $m$, we will shift the entire circle around so that person $m+1$ is the first person in the list (or person 1 if $m = n$).So after removing person $m$ and shifting everyone around, we are left with $n-1$ people in the circle: $$ \begin{array}{| l | c | c | c | c | c | c | c |} \hline \text{Index: }& 1 & 2 & \ldots & n-m & n-m+1 & \ldots & n-1\\ \text{Label: }& m+1 & m+2 & \ldots & n & 1 & \ldots & m-1\\ \hline \end{array} $$

We will be using the "mod" (modulo) operator to handle the clocking over from position n to position 1. To do this we need to modify the labels to be zero-based:

$$ \begin{array}{| l | c | c | c | c | c | c | c |} \hline \text{Index: }& 1 & 2 & \ldots & n-m & n-m+1 & \ldots & n-1\\ \text{Label - 1: } & m & m+1 & \ldots & n-1 & 0 & \ldots & m-2\\ \hline \end{array} $$

Note that $n-1 = (n-1) \bmod n$ and $0 = n \bmod n$. This suggests a way of expressing all the labels in a uniform manner:

$$ \begin{array}{| l | c | c | c | c | c | c | c |} \hline \text{Index: } & 1 & 2 & \ldots & n-m & n-m+1 & \ldots &n-1\\ \text{Label - 1: } & m\bmod n & (m+1)\bmod n & \ldots & (n-1)\bmod n & n\bmod n & \ldots & (n+m-2)\bmod n\\ \hline \end{array} $$

Now is a good time to make the labels one-based again. I'll also rearrange the terms slightly to make the mapping from indexes to labels more obvious:

$$ \begin{array}{| l | c | c | c | c |} \hline \text{Index: }& 1 & 2 & \ldots & n-1\\ \text{Label:} & [1+(m-1)]\bmod n + 1 & [2+(m-1)]\bmod n + 1 & \ldots & [(n-1) + (m-1)]\bmod n + 1\\ \hline \end{array} $$

This gives us a way of mapping from indexes to labels. The $i^{\text{th}}$ label in the list is $[i+(m-1)] \bmod n + 1$.

There are now $n-1$ people in the circle. So the index of the last person left will be $f_k(n-1)$. Hence the label of the last person left will be $[f_k(n-1)+m-1]\bmod n + 1$. Hence:

$$ f_k(n) = [f_k(n-1)+m-1]\bmod n + 1 \tag{1} $$

$m$ is the label of the first person removed from the circle of n people. How do we determine $m$?

If $k \le n$ then $m = k$. But what if $k > n$? In this case the circle will be circumnavigated one or more times before the $k^{\text{th}}$ person is eliminated.

We can use the modulo operator to determine the value of m, remembering that we must first zero-base the label, then apply the modulo operator, then add back 1 to the label at the end. So:

$$ \begin{align*} m = & (k-1) \bmod n + 1 & \text{for }k > n \\ m = & k & \text{for } k \le n \end{align*} $$

But these two equations can be collapsed into one, because when $k \le n$ then $k-1 < n$, so $k = (k-1) \bmod n + 1$. So this gives: $$ m = (k-1) \bmod n + 1 \tag{2} $$

Substituting into equation 1, we get the following:

$$ \begin{align*} f_k(n) &= [f_k(n-1) + ((k - 1) \bmod n + 1) - 1] \bmod n + 1 \\ &= [f_k(n-1) + (k - 1) \bmod n ] \bmod n + 1 \tag{3} \\ \text{So: }\\ f_k(n) &= [f_k(n-1) + k - 1 ] \bmod n + 1 \tag{4} \end{align*} $$

As promised, we have a recursive formula for $f_k(n)$ in terms of $f_k(n-1)$.

F# code for the recursive formula

The following F# function implements the recursive formula:let rec calcLastLeftInCircleWithIntervalByRecursiveFormula interval sizeOfCircle =

match sizeOfCircle with

| 1 -> 1

| n when n > 1

-> ( calcLastLeftInCircleWithIntervalByRecursiveFormula

interval (sizeOfCircle-1)

+ interval - 1

) % sizeOfCircle

+ 1

| _ -> raise (System.ArgumentException("The size of the circle must be positive!"))

This function runs much faster than the brute force approach. However it has a significant flaw. On my machine, a stack overflow occurs at some value of $n$ between 60 000 and 70 000. This is not really surprising, since the size of the stack is O(n) and the recursive call is not tail recursive.

By contrast, the recursive formula from the fifth post in the series was O(log n) in the stack size, since it expressed $f_{2}(2n+b)$ in terms of $f_{2}(n)$.

Next time

My next blog post will be a continuation of this topic.I will be deriving a more efficient algorithm which has O(log n) recursive calls rather than O(n). This will not only solve the stack overflow problem. It will also make the algorithm incredibly fast.

Subscribe to:

Comments (Atom)